Preprocessing text ensures that noise is removed and reduces the amount of data to deal with. In Section 5.2.2 we explained how to read data from different formats, such as txt, csv or json, that can include textual data, and we also mentioned some of the challenges when reading text (e.g., encoding/decoding from/to Unicode). In this section we cover typical cleaning steps such as lowercasing and removing punctuation, HTML tags and boilerplate.

As a computational communication scientist you will come across many sources of text, ranging from electronic versions of newspapers in HTML to parliamentary speeches in PDF. Moreover, most of these contents in their original shape will include data that is of no interest for the analysis and instead produces noise that might negatively affect the quality of the research. You have to decide which parts of the raw text should be considered for analysis and determine the shape of these contents in order to have a good input for the analytical process.

As the difference between useful information and noise is determined by your research question, there is no fixed list of steps that can guide you in this preprocessing stage. It is highly likely that you will have to test different combinations of steps and assess which options work best.
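The cleaning steps named in this section (lowercasing, removing punctuation, stripping HTML tags) can be sketched with the Python standard library alone. This is only an illustration under stated assumptions: the function name `clean_text` and the sample string are hypothetical, the tag-stripping regex is deliberately crude rather than a real HTML parser, and boilerplate removal is not covered.

```python
import html
import re
import string


def clean_text(raw):
    """Apply a few typical cleaning steps to a raw HTML snippet."""
    # Drop anything that looks like an HTML tag (a crude regex, not a full parser).
    text = re.sub(r"<[^>]+>", " ", raw)
    # Convert HTML entities such as &amp; back to plain characters.
    text = html.unescape(text)
    # Lowercase so that "Minister" and "minister" are treated alike.
    text = text.lower()
    # Remove ASCII punctuation.
    text = text.translate(str.maketrans("", "", string.punctuation))
    # Collapse the runs of whitespace left behind by the earlier steps.
    return re.sub(r"\s+", " ", text).strip()


# Hypothetical sample input, not taken from any real dataset.
sample = "<p>The Minister said: <b>Taxes &amp; tariffs</b> will rise!</p>"
cleaned = clean_text(sample)
print(cleaned)
```

Which of these steps you keep, and in what order, depends on the research question; for some analyses punctuation or capitalization is signal rather than noise.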

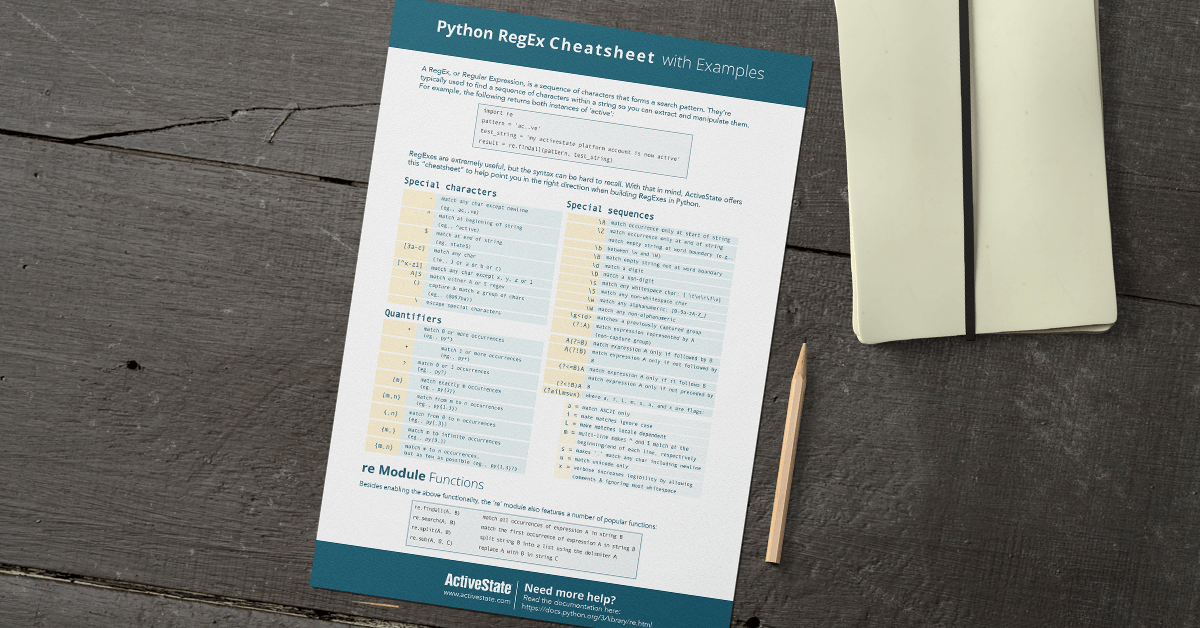

Using string manipulation to clean strings

In this post, we will go over some regex (regular expression) techniques that you can use in your data cleaning process. Regex techniques are mostly used for string manipulation. We will get to that in a second.

Data science is largely about understanding the data, and data cleaning is an essential part of this process. How valuable our data is really depends on how much we can get from it. Let’s start with understanding what string manipulation is and why it is important. Then, we will do a couple of common examples to practice.

String manipulation is a must in data cleaning because most of the world’s data is unstructured text. When dealing with textual data, an important step is to normalize the data. String manipulation is also a way to make your datasets more consistent with each other, which helps you combine and work with different datasets.

There are many ways monetary values can be represented in our data. We want to find a way to validate these values and make sure they fit our dataset. Regular expressions give us a formal way to specify those patterns. Python has built-in methods and libraries to help us accomplish this. We will use the re library, which is mostly used for string pattern matching.

Now, we will write an expression to match each of the values. We put a caret at the beginning and a dollar sign at the end. The caret tells the pattern to start matching at the beginning of the value, while the dollar sign tells it to match through the end of the value. This way it will match exactly what we specified in our regex.

A basic example of using a regular expression starts by importing the library with import re. We will compile the pattern. (Compiling lets us reuse the same regex over and over across our dataset.) Then we will use the compiled pattern to match our values. This method is especially useful with pandas, because we want to apply the same regex to every value in a column. Matching returns a match object, which can be converted into a boolean value using Python’s built-in bool function.

Let’s do an example of checking the phone numbers in our dataset. In this exercise we will define a regular expression to match US phone numbers, which means each value has to fit the following pattern: “xxx-xxx-xxxx”.
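As a rough sketch of the validation approach described in this post (anchor the pattern with a caret and a dollar sign, compile it once, then convert each match result with `bool`), the example below checks monetary values and US phone numbers. The sample values, the helper name `is_valid`, and the exact monetary format (a dollar sign, digits, and optional two-digit cents) are assumptions made for illustration; the post's own example values are not available.

```python
import re

# Hypothetical sample values; the post's original examples are not shown.
money_values = ["$12.99", "$5", "12.99", "$12.999"]
phone_values = ["123-456-7890", "1234567890", "123-456-789"]

# Assumed format: a dollar sign, one or more digits, optional two-digit cents.
money_pattern = re.compile(r"^\$\d+(\.\d{2})?$")

# US phone numbers in the form xxx-xxx-xxxx, anchored at both ends.
phone_pattern = re.compile(r"^\d{3}-\d{3}-\d{4}$")


def is_valid(pattern, value):
    """Return True if the compiled, anchored pattern matches the value."""
    # match() returns a match object or None; bool() turns that into True/False.
    return bool(pattern.match(value))


money_checks = [is_valid(money_pattern, v) for v in money_values]
phone_checks = [is_valid(phone_pattern, v) for v in phone_values]

print(money_checks)  # [True, True, False, False]
print(phone_checks)  # [True, False, False]
```

With pandas, the same helper could be applied to a whole column, for instance through `Series.apply`, so that every value is checked against the one compiled pattern.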
